slembcke

Enhanced Collision Algorithms for Chipmunk 6.2

There are some notable limitations in Chipmunk’s current code that a lot of people run into. Deep poly/poly or poly/segment collisions could produce a lot of contact points with wacky positions and normals, which were difficult to filter sensibly. This was preventing me from implementing the smoothed terrain collision feature that I wanted (fixing the issue where shapes can catch on the “cracks” between the endpoints of segment shapes). Also, until recently, line segment shapes couldn’t collide against other line segment shapes, even if they had a nice fat beveling radius.

They can now thanks to a patch from LegoCylon, but they have the same issues as above, generating too many points with wacky normals. I also had no way to calculate the closest points between two shapes, which prevented the implementation of polygon shapes with a beveling radius. Yet another issue is that the collision depth for all of the contacts is assigned the minimum separating distance determined by SAT. This can cause contacts to pop when driven together by a large force. Lastly, calculating the closest points is also a stepping stone on the way to proper swept collision support in Chipmunk in the future.

For some time now, I’ve been quietly toiling away on a Chipmunk branch to improve the collision detection and fix all of these issues. I’ve implemented the GJK/EPA collision detection algorithms as well as a completely new contact point generation algorithm. After struggling on and off for a few months with a number of issues (getting stuck in infinite recursion/loops, issues with caching, issues with floating point precision, suboptimal performance, etc… ugh), it finally seems to be working to my expectations! The performance is about the same as my old SAT based collision code, maybe 10% faster or slower in some cases. Segment to segment collisions work perfectly as do beveled polygons. Smoothed terrain collisions are even working, although the API to define them is a little awkward right now.

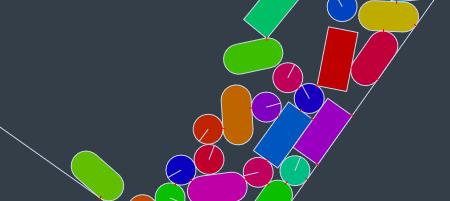

_Beveled line segments colliding with other shapes!_

GJK:

The aptly named Gilbert–Johnson–Keerthi algorithm is what calculates the closest points between two convex shapes. My implementation preserves the winding of the vertexes it returns, which helps avoid precision issues when calculating the separating axis of shapes that are very close together or touching exactly. With the correct winding, you can assume the edge you are given lies along a contour in the gradient of the distance field of the Minkowski difference. I’ve also modified the bounding box tree to cache the GJK solution. Then in the next frame, you can use that as the starting point. It’s a classic trick that makes GJK generally require only a single iteration per pair of colliding objects.
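
To give a feel for the core step, here is a minimal sketch (not the code from the branch) of the question 2D GJK keeps asking: which point on an edge of the Minkowski difference is closest to the origin? The `Vec2` type and the function names are made up for this example and are not Chipmunk API.

```c
#include <assert.h>

// Hypothetical 2D vector type for this sketch (not Chipmunk's cpVect).
typedef struct { double x, y; } Vec2;

// Parameter t in [0, 1] such that a + t*(b - a) is the point on
// segment a-b that is closest to the origin.
double closest_t_to_origin(Vec2 a, Vec2 b)
{
    double abx = b.x - a.x, aby = b.y - a.y;
    double len_sq = abx*abx + aby*aby;
    if (len_sq == 0.0) return 0.0; // degenerate edge: a == b

    // Project the origin onto the line through a and b, then clamp
    // the result so it stays on the segment.
    double t = -(a.x*abx + a.y*aby)/len_sq;
    if (t < 0.0) t = 0.0;
    if (t > 1.0) t = 1.0;
    return t;
}

// Linear interpolation between a and b.
Vec2 vec2_lerp(Vec2 a, Vec2 b, double t)
{
    assert(t >= 0.0 && t <= 1.0);
    Vec2 p = { a.x + t*(b.x - a.x), a.y + t*(b.y - a.y) };
    return p;
}
```

For an edge from (-2, -1) to (-2, 3), `closest_t_to_origin` returns 0.25, and interpolating gives the point (-2, 0), which is 2 units from the origin. Applying the same `t` to the vertexes on each original shape that produced the two edge endpoints is the usual way to recover the closest points on the shapes themselves.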

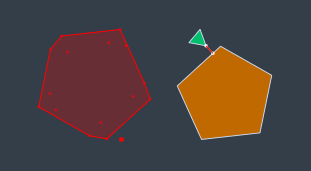

_GJK is rather abstract, but it calculates the closest points between two shapes by finding the distance between the Minkowski difference of two shapes (the red polygon) and the origin (the big red dot). If you look closely, the red shape is a flipped version of the pentagon with the little triangle tacked on to all its corners. It's one of the coolest and most bizarre algorithms I've ever seen. 😀 I'll probably make a blog entry about my implementation eventually too._
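
The nice part is that GJK never has to build the red polygon explicitly. It only asks for the point of the Minkowski difference that is farthest along some direction, and that can be computed from the two shapes separately. Below is a rough sketch of that support function, assuming convex polygons stored as plain vertex arrays. `Vec2`, `support_point` and `minkowski_support` are names invented for this example, not Chipmunk API.

```c
#include <assert.h>
#include <stddef.h>

// Hypothetical 2D vector type for this sketch (not Chipmunk's cpVect).
typedef struct { double x, y; } Vec2;

double vec2_dot(Vec2 a, Vec2 b) { return a.x*b.x + a.y*b.y; }

// Vertex of a convex polygon that lies farthest along direction d.
Vec2 support_point(const Vec2 *verts, size_t count, Vec2 d)
{
    assert(count > 0);
    size_t best = 0;
    double best_dot = vec2_dot(verts[0], d);
    for (size_t i = 1; i < count; i++) {
        double dot = vec2_dot(verts[i], d);
        if (dot > best_dot) {
            best_dot = dot;
            best = i;
        }
    }
    return verts[best];
}

// Support point of the Minkowski difference A - B along d:
// the farthest point of A along d minus the farthest point of B along -d.
Vec2 minkowski_support(const Vec2 *a, size_t a_count,
                       const Vec2 *b, size_t b_count, Vec2 d)
{
    Vec2 neg_d = { -d.x, -d.y };
    Vec2 pa = support_point(a, a_count, d);
    Vec2 pb = support_point(b, b_count, neg_d);
    Vec2 result = { pa.x - pb.x, pa.y - pb.y };
    return result;
}
```

As a quick check, take a unit square at the origin and a second unit square shifted 3 units to the right. Along the direction (1, 1) the support point of the difference is (-2, 1), which fits the picture: the difference shape sits entirely to the left of the origin, 2 units away, the same as the gap between the squares.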

EPA:

EPA stands for Erik-Peterson-Anders… Nah, it stands for Expanding Polytope Algorithm and is a very close cousin to GJK. While GJK can find the distance between two shapes, EPA is what you can use to find the minimum separating axis when they are overlapping. It’s sort of the opposite of the closest points. It gives you the distance and direction to slide the two shapes to bring them apart (as well as which points on the surface will be touching). It’s not quite as efficient as GJK, and it’s an extra step to run. That has the interesting effect of making collision detection of beveled polygons more efficient than regular hard-edged ones, since beveled shapes that only overlap within their beveling radius never need the EPA step. This is one thing I’m not completely happy with. Polygon-heavy simulations will generally run slower than with 6.1 unless you enable some beveling. It’s not a lot slower, but I don’t like taking steps backwards. On the other hand, it will be much easier to apply SIMD to the hotspots shared by the GJK/EPA code than my previous SAT-based code.
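
Here is a small sketch of why beveling can skip the expensive step. It assumes a closest-points query that returns one point on each un-beveled "core" shape and reports coincident points (distance zero) when the cores overlap. The types and names are hypothetical and only meant to show the decision, not how the branch structures it.

```c
#include <assert.h>

// Hypothetical 2D vector type for this sketch (not Chipmunk's cpVect).
typedef struct { double x, y; } Vec2;

typedef enum {
    PAIR_SEPARATED,     // no contact at all
    PAIR_BEVEL_CONTACT, // only the rounded skins overlap, GJK result is enough
    PAIR_NEEDS_EPA      // the cores themselves overlap
} PairStatus;

// p1 and p2 are the closest points on the two core shapes,
// r1 and r2 are the beveling radii of the shapes.
PairStatus classify_pair(Vec2 p1, Vec2 p2, double r1, double r2)
{
    assert(r1 >= 0.0 && r2 >= 0.0);
    double dx = p2.x - p1.x, dy = p2.y - p1.y;
    double dist_sq = dx*dx + dy*dy;
    double r_sum = r1 + r2;

    // Assumption of this sketch: zero distance means the cores overlap.
    if (dist_sq == 0.0) return PAIR_NEEDS_EPA;
    // Compare squared distances to avoid a square root.
    if (dist_sq < r_sum*r_sum) return PAIR_BEVEL_CONTACT;
    return PAIR_SEPARATED;
}
```

With both radii at zero, the middle case can never happen, so every touching pair of hard-edged polygons falls through to EPA. With a bit of beveling, most resting contacts land in `PAIR_BEVEL_CONTACT`, where the normal and depth follow directly from the closest points and the radii.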

Contact Points:

Having new collision detection algorithms is neat and all, but that didn’t solve the biggest (and hardest) issue: my contact point generation algorithm sucked! Actually, it worked pretty well. It has stayed mostly unchanged for 6 years now, but it has also accumulated some strange workarounds for rare conditions that it didn’t handle well. The workarounds sometimes produced strange results (like too many contact points or weird normals…). They also made it practically impossible to add the smoothed terrain collision feature.

In the new collision detection code, polygon to polygon and polygon to segment collisions are treated exactly the same. They pass through the same GJK/EPA steps and end up being passed to the same contact handling code as a pair of colliding edges. It handles collisions with endcaps nicely, always generates either 1 or 2 contact points for the pair, and uses the minimum separating axis for the normals. It’s all very predictable and made the smoothed terrain collisions go pretty easily. It only took me about 10 iterations of ideas for how to calculate the contact points before I got something I was happy with. -_- It really ended up being much, much harder than I expected for something that seems so simple in concept.

The implementation works somewhat similarly to Erin Catto’s contact clipping algorithm, although adding support for beveling (without causing popping contacts) and contact IDs made it quite a bit harder. There are some pretty significant differences now. Perhaps that is another good post for another day.
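
Until then, here is a deliberately simplified sketch of one way to get "1 or 2 contact points per edge pair". This is not the algorithm on the branch, and it ignores beveling and contact IDs entirely. It projects both edges onto the tangent (the direction perpendicular to the normal) and puts a contact at each end of the overlapping interval. All names and the `1e-9` tolerance are assumptions made up for this example.

```c
#include <assert.h>

// Hypothetical types for this sketch (not Chipmunk's own structs).
typedef struct { double x, y; } Vec2;
typedef struct { int count; Vec2 points[2]; } ContactSet;

double vec2_dot(Vec2 a, Vec2 b) { return a.x*b.x + a.y*b.y; }
double min2(double a, double b) { return a < b ? a : b; }
double max2(double a, double b) { return a > b ? a : b; }

// Point on edge a0-a1 whose tangent coordinate is s, clamped to the edge.
Vec2 point_at(Vec2 a0, Vec2 a1, double ta0, double ta1, double s)
{
    if (ta1 == ta0) return a0; // edge runs parallel to the normal
    double u = (s - ta0)/(ta1 - ta0);
    if (u < 0.0) u = 0.0;
    if (u > 1.0) u = 1.0;
    Vec2 p = { a0.x + u*(a1.x - a0.x), a0.y + u*(a1.y - a0.y) };
    return p;
}

// Edge A runs a0-a1, edge B runs b0-b1, n is the collision normal.
// The returned contact points are placed on edge A.
ContactSet edge_pair_contacts(Vec2 a0, Vec2 a1, Vec2 b0, Vec2 b1, Vec2 n)
{
    assert(n.x != 0.0 || n.y != 0.0);
    Vec2 t = { -n.y, n.x }; // tangent, perpendicular to the normal
    double ta0 = vec2_dot(a0, t), ta1 = vec2_dot(a1, t);
    double tb0 = vec2_dot(b0, t), tb1 = vec2_dot(b1, t);

    // Overlap of the two edges along the tangent.
    double lo = max2(min2(ta0, ta1), min2(tb0, tb1));
    double hi = min2(max2(ta0, ta1), max2(tb0, tb1));

    ContactSet out;
    if (hi - lo > 1e-9) {
        // The edges overlap over a stretch: one contact at each end.
        out.count = 2;
        out.points[0] = point_at(a0, a1, ta0, ta1, lo);
        out.points[1] = point_at(a0, a1, ta0, ta1, hi);
    } else {
        // The edges only meet at (or near) a single point.
        out.count = 1;
        out.points[0] = point_at(a0, a1, ta0, ta1, 0.5*(lo + hi));
        out.points[1] = out.points[0];
    }
    return out;
}
```

For a floor edge from (0, 0) to (4, 0) and a box edge from (2, -0.1) to (6, -0.1) with normal (0, 1), this yields two contacts at (4, 0) and (2, 0). Slide the box edge over so it starts at x = 4 and it collapses to a single contact at (4, 0). The real code has to do a lot more than this, which is where most of those 10 iterations went.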

Coming to a Branch Near You!

The branch is on GitHub here if you want to take a peek and poke around. It’s been a pretty big change, and hasn’t been extensively tested yet. I wouldn’t recommend releasing anything based on it quite yet, but it should be plenty stable for development work, and should even fix a number of existing issues. I’d certainly appreciate feedback!